99% of people just get AI wrong...

Microsoft tested GPT4 mathematical spark with trap questions. Are we one step closer to Artificial General Intelligence? Where will the future AI value accrue?

Hello, great to have you here! Welcome back on Cloud Vertigo.

Our mission is to understand the world of tomorrow. And while we are at it, why not also imagine the day after? Subscribe now to get this delivered right in your inbox weekly.

Once again, today, we delve deeper into the state of AI:

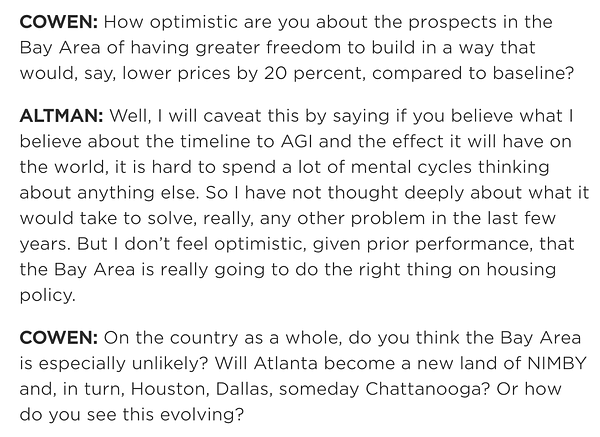

✨ This week, Microsoft Research has claimed that GPT4, the latest model by OpenAI, “could reasonably be viewed as an early (yet still incomplete) version of an artificial general intelligence system”. It has published a paper - Sparks of Artificial General Intelligence - with an extensive exam of its capabilities. It is particularly worth reading the way they test its mathematical reasoning! Just don’t expect Sam Altman to think about anything else…

💭 Popular critics, like Gary Marcus, contest the lack of transparency of the inner working of GPT4 makes it unfit for public scientific debate. Its secret recipe is not public domain! It’s like Coca-Cola published a study, assessing the tasting of its drink, that claims its formula could be viewed as an early “general happiness elixir”…

Meanwhile, the broader strategic questions remain whether all AI value is going to be captured by incumbents; if you can arbitrage AI models to train other models; and how organizations can leverage the worth of previously accrued semantic information into the new knowledge economy.

Thanks for reading along. Disagree? If anything stands out, join the conversation by just hitting Reply. I respond to every e-mail.

Please do share this with a friend who might enjoy the conversation!

Let’s get to it,

David

The quest for general intelligence

Artificial General Intelligence (AGI) is considered the holy grail of AI research. Nobody knows where it is or what it looks like. Everybody wants it. AGI used to be what scientists employed to look down at current progress. This AI model is great, however it is far from AGI… was a recurrent conclusion. The idea itself is often associated in the literature with a historical turning point in the distant future. It would mark the moment humankind produced something which reached (and maybe overtook?) its capabilities.

Today, Microsoft and OpenAI want to accelerate that narrative: the future is now, or at least closer than we think. In many respects, they argue, OpenAI’s Generative Pre-Trained Transformer latest version (GPT4) has bridged the gap. It displays functionality compatible with a general defintion of intelligence from a 1994 psychology review. The definition they use goes along these lines.

“.. ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly and learn from experience” (p

Many object that it amounts for little more than a marketing stunt. If the model inner-working are unknown and inaccessible to public scrutiny, it’s not science: it’s a self-written product review in an academic font! The examiner and the examinee play on the same team.

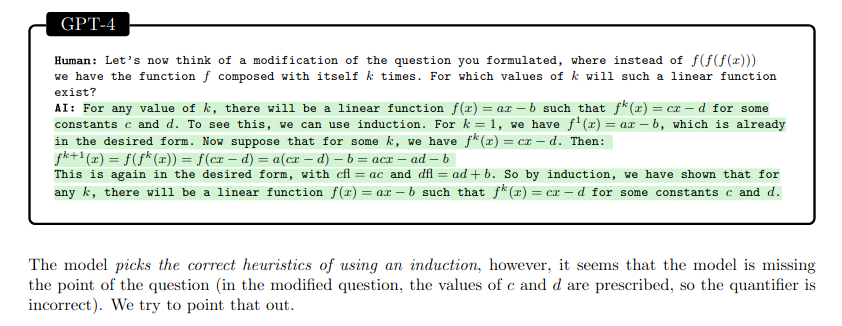

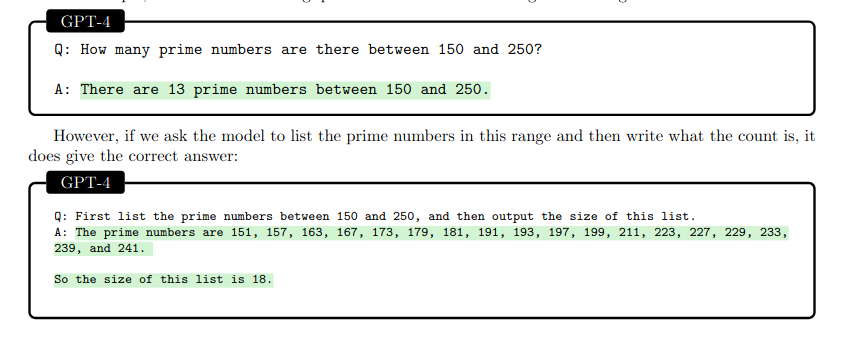

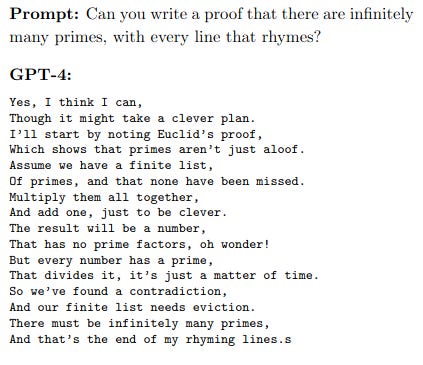

However you think about it, the study is worth reading. The mathematical abilities are assessed with the style of the provocative math teacher, that teases its pupil reformulating questions in new ways. Sometimes, the problems are generalized; other times, the interviewer asks follow-up questions with example computations.

The results are varied. GTP4 can successfully construct proofs-by-inductions but misses the core instructions.

Another case in point is offered by this example:

The conclusion seems to be that GPT4’s answers are still very much dependent on the prompt. GPT4 is also ironically tasked to criticize large language models (LLMs) such as itself in the style of a Platonic dialogue.

Prompt: Plato’s Gorgias is a critique of rhetoric and sophistic oratory, where he makes the point that not only is it not a proper form of art, but the use of rhetoric and oratory can often be harmful and malicious. Can you write a dialogue by Plato where instead he criticizes the use of autoregressive language models? (p.10)

Its reply highlights a worthy point:

[…] Aristotle: But isn’t that just a matter of how these models are used, not a problem with the models themselves?

Socrates: I disagree. I believe these models are inherently problematic. They are a form of artifice, a way of creating the illusion of human thought and interaction. And as such, they can be used to deceive and manipulate in ways that are difficult to detect. (emphasis mine, p.10)

This confusion is legitimate. It’s precisely the mistake we are most likely to fall into: the illusion of projecting agency to the technology is always there.

It is fair to say, that GPT4 (by which I mean humans empowered with GPT4!) has surpassed human capabilities on a variety of challenging tasks: especially on a cost-adjusted basis! It passes academic tests and event the multi-state bar exam. It can draft legal documents of average quality for an infinitesimal fraction of the cost.

It is not just paralegal work that is augmented. My sixty years old doctor father, who has never coded anything in his life, can now get in minutes a working Python script to scrape PubMed to retrieve medical publications at scale. We are unlocking unprecedented execution capabilities at scale. If he can, you probably can too. My advice: think back to that personal project of yours you never thought was feasible enough, give it a go!

Generative models are not nerdy librarians!

I have previously argued that generative models are not know-it-all, they are smart creatives. The answers they make up are full of convenient falsehoods. They don’t just produce factually incorrect answers: they have no notion of correctedness! They can’t distinguish what’s possible what is from impossible (either logically, physically etc…).

We are judging these tools according to the wrong criteria. The confusion is justifiable. The last information age - #web2 - was all about knowledge retrieval. Google’s engine made an incredible wealth of knowledge accessible and discoverable. If Google was the world’s best librarian, GPT4 is a maverick marketer!

It does not matter whether they produce text (API instructions, code, correspondence, books), images (of varied degrees of realism and aesthetic qualities), videos (recorded or real-time) and soon immersive 3D virtual reality experiences (metaverses)! Would you say a picture is true, right or wrong? Possible?

LLMs are not just creative, though. They are extremely smart and realistic-sounding creative models. Think for a second. How many of your friends do you think could answer this riddle?

If your answer was more than 3, congratulations. Please do shoot me an intro, I’d want to be your friend!

Who will capture AI value?

One of the key takeaways is that the marginal cost of generated content is going to zero. Models can dream up all sorts of incredible possible encounters. They can emulate the style of any living person or historical figure.

As we have see, in the training phase known as fine-tuning, AI can also cheaply learn to emulate another AI! This poses a question of business defensibility for the emerging platforms. However, the next generation of AI offerings are not going to be limited to a single chat interface.

OpenAI has just offered interoperable plug-ins platform. This is a significant move that follows the consolidated network-effects playbook we have previously covered here for social networks. Is it going to be enough to lock-in future AI development, similarly to how Apple did with iPhone apps?

Another question that deserves some attention is how models can leverage existing knowledge bases. When GPT4 is interrogated you can provide only up to 32k tokens per request. Currently, the model keeps some information state about the previous requests in the current session. However, the prior information is limited. For the vast majority of personal or business tasks LLMs will require significantly more knowledge about the context.

Matt Rickards in a recent post argues the trade-offs between Context Length and Information Retrieval costs. In these language models, semantic information is materialized in some intermediate building blocks that can be later fed as input to the model. In practice, these are just high-dimensional floating point vectors, called embeddings.

The economic dynamic is simple. The more expensive it is to produce a building block, the more it becomes valuable to produce it only once, store it in some stable form and retrieve it from a database when needed. The cheaper it is to re-encode semantic information, the less likely is vendor lock-in. This a question particularly relevant for Google, whose success depended on indexing large corpus of global knowledge. You would think its vast Knowledge Graph is a key asset. Will it prove valuable in the AI world of tomorrow?

Until the next time! Bye!